Rock Paper Scissors - Image Classification

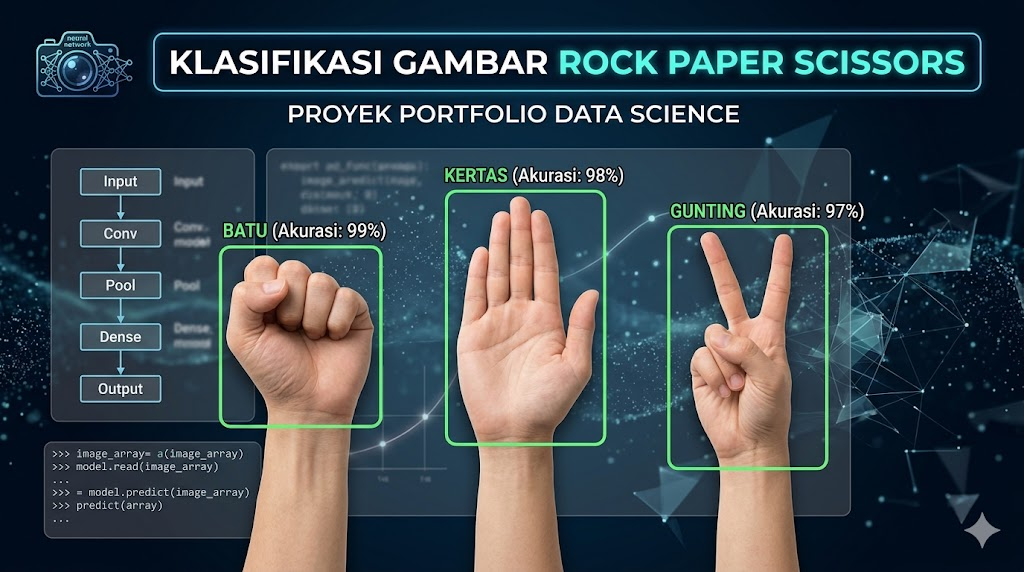

A foundational computer vision project that trains a Sequential neural network to classify hand gestures (rock, paper, scissors).

This project was developed as the final submission for the "Belajar Machine Learning untuk Pemula" (Machine Learning for Beginners) course at Dicoding. The primary objective was to build an image classification model that accurately recognizes hand gestures representing rock, paper, or scissors.

Development covered a complete machine learning pipeline. It started with dataset preparation, including a train-validation split. To help the model generalize and to reduce overfitting, image augmentation was applied using Image Data Generators. The core of the project is a custom Sequential model trained to an accuracy of over 85%. The project also includes an interactive prediction feature within Google Colab, which lets users upload their own images and have the trained model classify them immediately.
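The pipeline described above can be sketched roughly as follows. This is a minimal illustration, not the project's actual code. It assumes TensorFlow/Keras as the framework (the description only mentions Image Data Generators and a Sequential model). The image size, augmentation settings, validation fraction, layer sizes, and the helper names `build_generators` and `build_model` are all hypothetical.

```python
# All values below are assumptions for illustration; the project's real
# settings are not stated in this README.
IMAGE_SIZE = (150, 150)
INPUT_SHAPE = IMAGE_SIZE + (3,)   # RGB images
NUM_CLASSES = 3                   # rock, paper, scissors

AUGMENTATION = dict(
    rescale=1.0 / 255,        # scale pixel values to [0, 1]
    rotation_range=20,        # random rotations, in degrees
    shear_range=0.2,
    zoom_range=0.2,
    horizontal_flip=True,
    fill_mode="nearest",
    validation_split=0.4,     # assumed fraction held out for validation
)


def build_generators(data_dir, batch_size=32):
    """Create training and validation iterators from one folder per class."""
    # TensorFlow is imported inside the function so the settings above can
    # be read without it installed.
    from tensorflow.keras.preprocessing.image import ImageDataGenerator

    datagen = ImageDataGenerator(**AUGMENTATION)
    common = dict(
        target_size=IMAGE_SIZE,
        batch_size=batch_size,
        class_mode="categorical",
    )
    # With a single generator, the validation subset goes through the same
    # random transforms as the training subset.
    train_gen = datagen.flow_from_directory(data_dir, subset="training", **common)
    val_gen = datagen.flow_from_directory(data_dir, subset="validation", **common)
    return train_gen, val_gen


def build_model():
    """Build and compile a small convolutional Sequential classifier."""
    from tensorflow.keras import layers, models

    model = models.Sequential([
        layers.Input(shape=INPUT_SHAPE),
        layers.Conv2D(32, (3, 3), activation="relu"),
        layers.MaxPooling2D((2, 2)),
        layers.Conv2D(64, (3, 3), activation="relu"),
        layers.MaxPooling2D((2, 2)),
        layers.Flatten(),
        layers.Dense(128, activation="relu"),
        layers.Dropout(0.5),
        layers.Dense(NUM_CLASSES, activation="softmax"),
    ])
    model.compile(
        optimizer="adam",
        loss="categorical_crossentropy",
        metrics=["accuracy"],
    )
    return model
```

Given a dataset directory with one subfolder per class, training would then look something like `model.fit(train_gen, validation_data=val_gen, epochs=20)`, where the epoch count is again an assumption.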

Technologies Used

Key Features

- Image Augmentation: Implementation of data generators to artificially expand the training dataset and improve model robustness.

- Sequential Model Architecture: A custom Sequential network, built with a deep learning framework and designed for image feature extraction.

- High Accuracy Output: Reaches an accuracy above 85% in under 30 minutes of training.

- Interactive Image Prediction: Built-in widget functionality to test the model with newly uploaded user images.
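For the interactive prediction feature, the last step is turning the model's output vector into a readable label. The sketch below shows one way to do that in plain Python. It assumes the model outputs one probability per class and that classes are indexed alphabetically (which is how Keras directory loaders order class folders). The `decode_prediction` helper and the example probabilities are hypothetical.

```python
# Assumed class order: alphabetical by folder name.
CLASS_NAMES = ["paper", "rock", "scissors"]


def decode_prediction(probabilities, class_names=CLASS_NAMES):
    """Return (label, confidence) for a single vector of class probabilities."""
    if len(probabilities) != len(class_names):
        raise ValueError("expected one probability per class")
    # Index of the highest probability.
    best = max(range(len(probabilities)), key=lambda i: probabilities[i])
    return class_names[best], float(probabilities[best])


# Example with made-up probabilities, standing in for one row of model output.
label, confidence = decode_prediction([0.05, 0.90, 0.05])
print(f"{label} ({confidence:.0%})")  # prints "rock (90%)"
```

In a Colab notebook, the probabilities would come from running the trained model on the uploaded image after resizing and rescaling it the same way as the training data.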